Measurement Architecture for Better Decisions

Measurement architecture and decision-making: how does the structure of measurement turn a business objective into an optimization signal?

A web service tracking report can appear clear even when the control logic of tracking is faulty. The problem rarely lies in reporting. It usually starts where a business objective is translated into the signal that guides algorithms. Measurement architecture determines what remains visible, what can be managed, and what the data means.

Introduction

In many analytics implementations, measurement architecture is a product of accumulation over time. It results from ad hoc development that has never been audited as a whole. Systems collect large amounts of data and the reports look credible, so the whole begins to look complete. Yet technically functional measurement is not the same thing as a signal that guides the business.

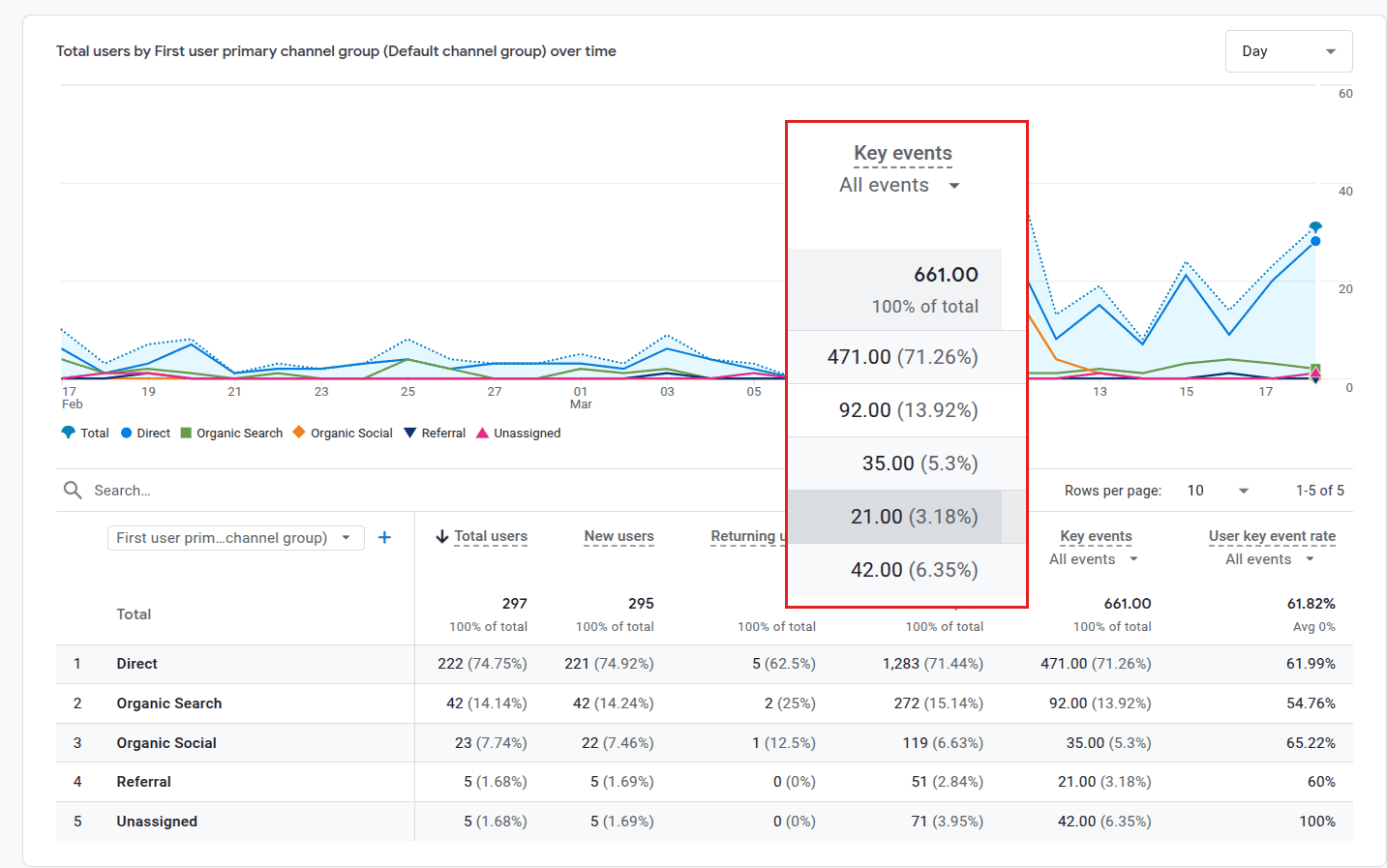

When a system optimizes mere activity, it can produce data that looks strong but carries no business value. For example, 297 users can generate 661 key events without a single one of them describing an actual business outcome. Reporting appears correct, but optimization begins to steer toward the wrong thing.

In this article, I examine measurement architecture as a complete mechanism. Measurement architecture is a structure that converts a business objective into an optimization signal. Algorithmic behavior is a consequence of this signal. If the foundation of the structure is weak, algorithms begin to reinforce irrelevant behavior and distort decision-making.

I address the operating model of measurement architecture:

- The report is correct. The data faithfully reflects what it has been taught to collect.

- The system follows its instructions. Reporting describes activity because the control logic of the architecture has been set to measure actions, not outcomes.

- Structural distortion. A deficient structure in measurement architecture leads to a distorted optimization signal. When this signal guides automation, algorithms reinforce irrelevant behavior, which blinds decision-making and distorts resource allocation.

Starting point and concrete observations

Let us begin with a central and concrete observation. Consider a recent GA4 report view showing 297 active users and as many as 661 key events. The report itself appears technically flawless: data updates, channel groupings are clearly distinguishable, and “key events” accumulate at a steady rate. At first glance, this looks like functional measurement that communicates strong engagement.

In reality, the report reveals a deep structural problem. When we examine the composition of the key events, we find that they do not correlate with business outcomes. They include scrolls, file downloads, and pageviews. All of these are behavioral signals. They describe site activity, but they say nothing about which actions move the company’s business forward.

This ratio of 661 key events to 297 users is a textbook example of a distorted optimization signal: the measurement architecture gives an impression of quality even though it optimizes mere user activity. This observation is the starting point for why measurement architecture is needed.
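The ratio can be checked with a few lines of arithmetic. The following is only an illustrative Python sketch: the variable names are my own, and the outcome count of zero restates the observation that none of the key events describes a business outcome.

```python
# Figures from the GA4 report view discussed above.
active_users = 297
key_events = 661
# Per the observation above, no key event describes a business outcome.
outcome_key_events = 0

# Volume metric: how many key events each active user produces on average.
key_events_per_user = key_events / active_users
# Meaning metric: what share of key events describes an actual outcome.
outcome_share = outcome_key_events / key_events

print(f"Key events per active user: {key_events_per_user:.2f}")
print(f"Share of key events describing an outcome: {outcome_share:.0%}")
```

The first number looks healthy (more than two key events per user), while the second shows that none of that volume carries a business outcome.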

In this case, the issue is a mismatch between measurement logic and objectives:

- The report is correct. The data reflects exactly what it has been taught to collect and report.

- The system follows its instructions. The report truthfully describes what has been set in the system. In this case, it describes behavioral activity.

- Structural problem. The measurement architecture leads to a description of activity rather than to a business objective. When this distorted optimization signal is fed to automation, algorithms begin to reinforce irrelevant behavior. This biases decision-making.

Why does tracking appear functional?

Measurement often appears functional because data accumulates in large amounts. Carefully implemented tracking produces clear reports, and user counts, or at least event counts, grow. Key events are often abundant as well, and user counts shift from one focal point to another. This is why the whole appears functional.

The user interface reinforces this interpretation. When an event is marked as a key event, it begins to look significant to the business. The same event rises into summaries, comparisons, and optimization discussions. It is then too often assumed that the visible metric describes the correct outcome.

Technically, there is often no clear error or warning sign to observe. Events fire, key pages are visible, and sessions accumulate. This is why the problem remains hidden. Measurement can appear functional precisely when its control logic is weak.

What is actually distorted?

The actual distortion is not formed by an individual event. The distortion is typically in the entire structure:

- The business objective does not become the correct optimization signal.

- Measurement architecture produces a signal that describes activity.

Decision-making, however, requires a signal that describes value.

- Scrolling is not a sale.

- A file download is not a lead.

- A long visit on a page is not an offer.

- Viewing multiple pages is not a decision.

In many cases, these are elevated to key events. The structure then begins to reward activity. As a consequence, data quality often appears sufficient even though the meaning of the signal is entirely wrong from a business perspective.

The problem worsens if the same signal is also used for optimization. Systems in this chain do not know the business objective. They follow the signal they are given. The end result is that the signal describes the wrong thing, and advertising or customer flows are steered in the wrong direction.

A Google Analytics 4 key event indicates that some action has been marked as important in reporting. Only when this key event is made into, for example, a Google Ads conversion does the same signal also begin to guide bid optimization and budget allocation.

Why is the problem often misinterpreted?

I have observed that the problem is often looked for in the wrong place or from the wrong starting points. The aim is to find a missing trigger, a broken tag, or an error in a report. These can indeed be real problems. However, they do not solve the central problem.

Measurement rarely improves in the right direction by adding events. First, one must determine which event describes a business outcome. After that, other events are set as supporting signals, not as central control signals. If this distinction is not made, the organization gets more data but a weaker control model.

Decision-making layer

This is not an analytics problem but a decision-making problem. Measurement affects, for example, which traffic is considered good and how campaigns are managed. It also affects which problem gets corrected and how the correction is implemented.

If a wrong signal is interpreted as correct and strong, for example in advertising, budget shifts to the wrong place. If activity appears as outcome, quality falls into the background. Reports are then dominated by self-reinforcing volume, and algorithms begin to seek more volume. The decision risk does not arise when the report is interpreted. It has already been realized in the design of measurement.

This is why measurement architecture belongs in the vocabulary of company management. It is not only a method handed to an implementer for designing and implementing measurement. The architecture as a whole determines what kind of signal remains available for decisions, budget, and automation. The technical implementer is often assigned the whole task without the necessary background information. This rarely leads to the best possible outcome from a decision-making perspective.

Mechanism

Business objectives

The implementation of high-quality measurement always begins with business objectives. In the first stage, one must determine what one wants to steer. After that, one can decide what is worth measuring so that the objective is achieved. The starting point of measurement is what we want systems to tell us.

Let us take examples:

- If the objective is revenue, measurement must identify purchases.

- If the objective is a high-quality lead, measurement must identify a genuine lead.

A typical objective is profitability. In that case, logic that differentiates value is needed. Otherwise, measurement remains at the level of activity. The first question is not what can be technically measured but what systems should optimize. It is wise to lock this in as the first stage of measurement architecture design.

Tip. Start from business, not from the tool.

Layers of measurement architecture

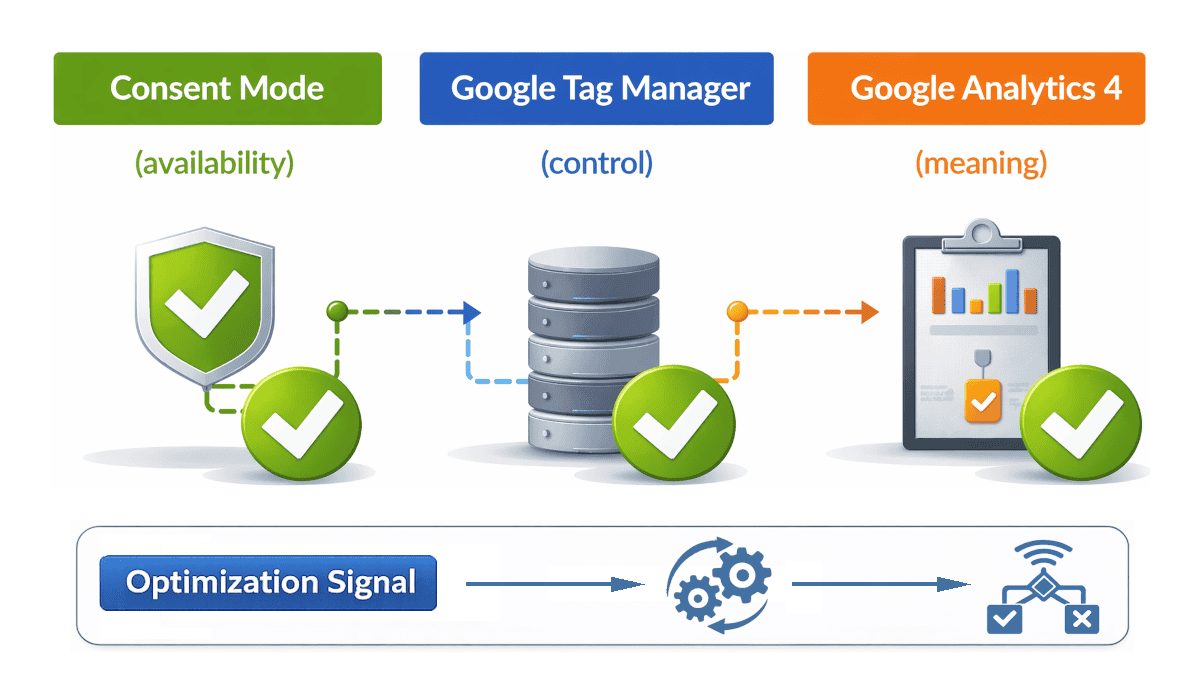

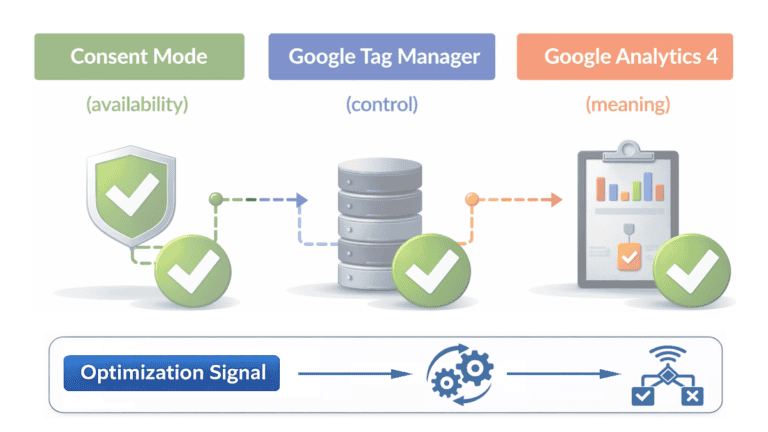

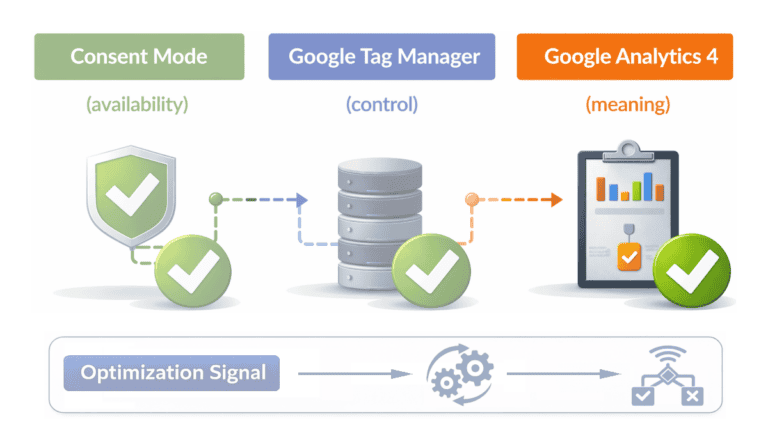

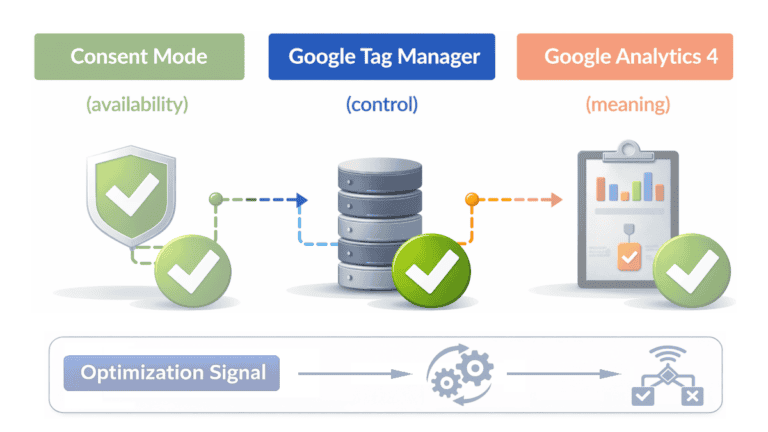

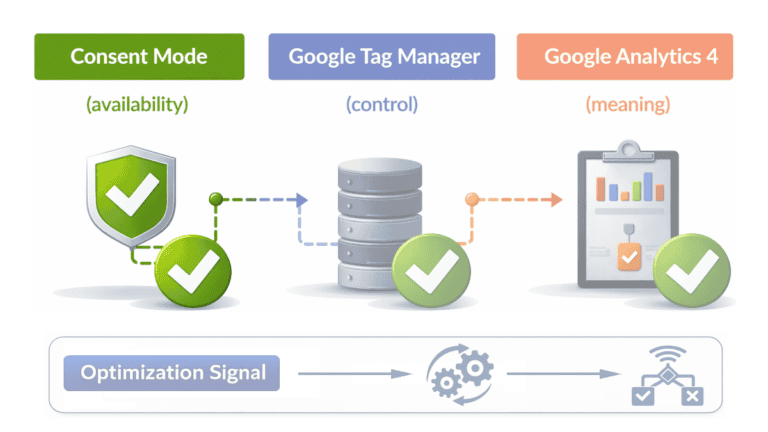

Thinking in system layers is a central part of measurement architecture. It ensures that business objectives translate into algorithmic behavior. This enables more precise optimization based on actual business value, not mere technical conversions. By identifying and developing the layers, we improve the availability, management, and meaning of optimization signals.

Measurement architecture consists of three complementary layers that together convert a business objective into a usable optimization signal.

Availability layer (signal availability)

Determines how much of user data collection is permitted under privacy constraints. The layer ensures that only accepted cookies and consents enable signal generation.

Control layer (signal control)

Is responsible for the precise firing of the signal: events are recorded once, at the right time, and with the correct parameters. This layer ensures that meaningful data is passed forward without unnecessary noise.

Analytics layer (signal meaning)

Translates the collected signal into business information. The layer interprets events and structures them into a metric that automation and budgeting can follow.

Although the layers are often realized in tools such as Consent Mode, Google Tag Manager, and Google Analytics 4, these are examples here, not the main subject of our discussion. Together, these layers form a clear, comprehensive measurement architecture.
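The three layers can be pictured as consecutive filters on the same event stream. The sketch below is a conceptual Python illustration only. It does not call or imitate the Consent Mode, Google Tag Manager, or Google Analytics 4 APIs, and `RawEvent`, the layer functions, and `OUTCOME_NAMES` are hypothetical names chosen for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RawEvent:
    event_id: str          # hypothetical unique id per user action
    name: str              # event name, e.g. "scroll" or "purchase"
    consent_granted: bool  # whether the user allowed measurement


def availability_layer(events):
    """Signal availability: keep only events whose collection was consented to."""
    return [e for e in events if e.consent_granted]


def control_layer(events):
    """Signal control: record each action once by dropping duplicate firings."""
    seen = set()
    unique = []
    for e in events:
        if e.event_id not in seen:
            seen.add(e.event_id)
            unique.append(e)
    return unique


# Assumption: in this example only a purchase counts as a business outcome.
OUTCOME_NAMES = {"purchase"}


def analytics_layer(events):
    """Signal meaning: keep only events that describe a business outcome."""
    return [e for e in events if e.name in OUTCOME_NAMES]


def optimization_signal(events):
    """Run the layers in order: availability, then control, then meaning."""
    return analytics_layer(control_layer(availability_layer(events)))


raw = [
    RawEvent("e1", "scroll", True),
    RawEvent("e2", "purchase", True),
    RawEvent("e2", "purchase", True),   # the same purchase fired twice
    RawEvent("e3", "purchase", False),  # purchase without consent
]
signal = optimization_signal(raw)
print([e.event_id for e in signal])
```

Of the four raw events, only one consented, deduplicated purchase reaches the optimization signal. Each layer removes a different kind of problem, which is why a weakness in one layer cannot be repaired in another.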

All organizations that perform measurement have some kind of measurement architecture, even if not all are aware of it. It may have formed over time, or it may have been designed and implemented based on selected objectives.

Optimization signal

An optimization signal is the information transmitted to systems on the basis of which algorithms select the content to be displayed, target audiences, and price levels. If the signal does not reflect business value, algorithms optimize for the wrong objectives. For this reason, the optimization signal cannot start from what the user interface happens to measure. It must start from the translation of a business objective into an optimizable signal.

Signal quality is formed through measurement architecture, starting from consent (Consent Mode) and arriving at the report through carefully chosen intermediate stages.

Algorithmic behavior

Algorithmic behavior is a consequence of the signal that measurement architecture produces. Systems do not evaluate the business objective independently. They follow the signal that is given to them. This phenomenon is often described as follows: a weak input signal produces a weak outcome.

On the basis of this signal, algorithms begin to steer visibility, targeting, and pricing. At the same time, their choices generate new data that reinforces the same control logic. If the signal does not correspond to the business objective, systems begin to scale the error. If the signal describes the correct outcome, systems begin to scale the business-desired objective.

- A wrong signal is reinforced in algorithmic processing and steers resources incorrectly.

- A correct signal scales in a goal-bound manner and steers automation in the desired way.

This is why algorithmic behavior should not be examined separately from measurement architecture. The end result of measurement architecture decisively affects what systems begin to follow and learn.

This chain proceeds as follows: business objective → measurement architecture → optimization signal → algorithmic behavior.

The impact of algorithmic behavior and optimization

If the optimization signal is not based on a business objective, algorithms reinforce the error. They steer budget and automation toward easily available but distorted data. In this context, optimization can appear effective even though it deviates from actual value.

Good results are often achieved already by setting a few carefully chosen events to function as the actual control signal. Other measurement events are allowed to support this, but they must not be allowed to determine budget or automation.

The availability layer (Consent Mode) determines how much of the signal can be observed and transmitted at all. The consent implementation also determines whether this signal is formed in a managed way or remains partly deficient. Modeled data can patch part of the data that is missing because consent was not given, but it does not fix a weak signal or turn wrong information into correct information. In that case, the consent implementation may add noise, uncertainty, and interpretation risk.

What should be measured and how is the signal built?

Central business objectives should be defined precisely. In e-commerce, measurement architecture should elevate the actual purchase event as the primary-level signal. In lead business, the primary-level signal should not be every form submission but only a lead that meets agreed quality criteria. Such criteria can include the correct service need, sufficient budget, the correct customer profile, or a sales-confirmed contact. All other behavior and progression should initially be left as supporting signals. Signal quality can be further improved by distinguishing higher business value leads from lower value leads. This way, measurement does not optimize mere volume but business-usable demand.
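The difference between a form submission and a qualified lead can be sketched as a simple rule. This is a minimal Python illustration under stated assumptions: the field names are hypothetical, and requiring all four criteria at once is my simplification. An organization would agree on its own combination.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LeadSubmission:
    # Hypothetical flags mirroring the example criteria named above.
    service_need_matches: bool
    budget_sufficient: bool
    profile_matches: bool
    confirmed_by_sales: bool


def is_qualified_lead(lead: LeadSubmission) -> bool:
    """Assumption: a lead qualifies only if it meets every agreed criterion."""
    return all(
        (
            lead.service_need_matches,
            lead.budget_sufficient,
            lead.profile_matches,
            lead.confirmed_by_sales,
        )
    )


def signal_role(lead: LeadSubmission) -> str:
    """Qualified leads become the primary signal; the rest stay supporting."""
    return "primary" if is_qualified_lead(lead) else "supporting"


strong = LeadSubmission(True, True, True, True)
weak = LeadSubmission(True, False, True, False)
print(signal_role(strong), signal_role(weak))
```

Both submissions would count as one form submission each in a report, but only the first is passed on as the primary-level signal.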

However, a purchase event alone is often not sufficient as the best optimization signal. If the system sees two purchases as equal in value even though their margin differs substantially, optimization begins to favor revenue rather than profitability. In that case, the product margin or a weighted value derived from it should be transferred via the data layer as part of the signal. This way, a higher-margin purchase receives a stronger conversion value than a low-margin purchase. The same logic applies to leads. If a lead relates to a higher-margin service, a larger project, or otherwise more valuable demand, its conversion value can also be set higher. This way, measurement begins to steer systems toward business-valuable outcomes rather than merely greater volume.
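A margin-weighted conversion value can be sketched as follows. This is an illustrative Python example, not a description of any tool's API: `margin_weighted_value` and `purchase_payload` are hypothetical helpers, the payload keys are an assumed shape for a data layer entry, and weighting by revenue times margin rate is only one possible rule.

```python
def margin_weighted_value(revenue_eur: float, margin_rate: float) -> float:
    """Return a conversion value weighted by margin (assumed rule: revenue x margin)."""
    if not 0.0 <= margin_rate <= 1.0:
        raise ValueError("margin_rate must be between 0 and 1")
    return round(revenue_eur * margin_rate, 2)


def purchase_payload(transaction_id: str, revenue_eur: float, margin_rate: float) -> dict:
    """Build a hypothetical data layer entry that carries the weighted value."""
    return {
        "event": "purchase",
        "transaction_id": transaction_id,
        "value": margin_weighted_value(revenue_eur, margin_rate),
        "currency": "EUR",
    }


# Two purchases with equal revenue but different margins (illustrative figures).
high_margin = purchase_payload("T-1", 200.0, 0.45)
low_margin = purchase_payload("T-2", 200.0, 0.10)
print(high_margin["value"], low_margin["value"])
```

With equal revenue, the higher-margin purchase now carries a larger value, so a system that optimizes toward value is steered toward profitability rather than revenue alone.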

Once business objectives have been defined, the next task is to ensure the discipline and interoperability of the layers. The organization should design the availability layer so that the signal remains as usable as possible within the limits permitted by law. The control layer should be implemented so that events are formed consistently and without unnecessary noise. The analytics layer should be designed so that the event describes the correct business outcome rather than mere activity.

The third manageable whole is phasing. The organization should describe progression as business stages rather than isolated events. In that case, data can be interpreted on the basis of whether it moves toward the objective or not. This way, measurement is not based only on an individual event but on the stage that the user represents in business terms.
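Phasing can be sketched by mapping events to ordered business stages and asking how far a user has progressed. The stage names and the event-to-stage mapping below are hypothetical and would be defined per business. The Python sketch only shows the idea of reading a stage instead of an isolated event.

```python
from enum import IntEnum
from typing import Iterable, Optional


class Stage(IntEnum):
    # Hypothetical business stages, ordered from early to late.
    VISIT = 1
    INTEREST = 2
    INTENT = 3
    OUTCOME = 4


# Assumed mapping from event names to the stage they represent.
EVENT_STAGE = {
    "page_view": Stage.VISIT,
    "file_download": Stage.INTEREST,
    "form_start": Stage.INTENT,
    "purchase": Stage.OUTCOME,
}


def furthest_stage(event_names: Iterable[str]) -> Optional[Stage]:
    """Return the latest stage reached, or None if no event maps to a stage."""
    stages = [EVENT_STAGE[name] for name in event_names if name in EVENT_STAGE]
    return max(stages) if stages else None


user_events = ["page_view", "page_view", "file_download"]
print(furthest_stage(user_events))
```

A user with many pageviews and a download is still only at the interest stage here. Volume of events does not move the user forward; reaching a later stage does.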

The reliability of measurement architecture is not a one-time achievement. It requires continuous validation. I recommend regular audits and reviews of policy documentation to ensure that measurement processes and collected data represent actual behavior and business value. This step is critical because it makes it possible to identify and correct errors before they distort automation and decision-making.

How to expose a wrong optimization signal

Open one important report from your web service tracking. Choose one that you use for decision-making and trust the most. Look at its three most important key events and answer the following questions in relation to this report.

- Is there an event in the report that describes a direct business outcome?

- Is decision-making based on an event that describes a business outcome or only on events that describe activity? Has other behavioral information been left in a secondary role so that it does not steer optimization?

If you cannot answer each point clearly, the problem is not in the report. The problem is in the measurement architecture.
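The questions above can also be expressed as a small check. The Python sketch below is illustrative: `audit_key_events` is a hypothetical helper, and which events count as outcomes is an assumption that each organization must define for itself.

```python
def audit_key_events(key_events, steering_events, outcome_events):
    """Answer the checklist for one report.

    key_events:      events marked as key events in the report
    steering_events: events that actually steer optimization or decisions
    outcome_events:  events agreed to describe a direct business outcome
    """
    key = set(key_events)
    steering = set(steering_events)
    outcome = set(outcome_events)
    return {
        # Is there a key event that describes a direct business outcome?
        "has_outcome_key_event": bool(key & outcome),
        # Is steering based only on outcome events?
        "steering_uses_outcome_only": bool(steering) and steering <= outcome,
        # Activity events that steer optimization although they should not.
        "activity_events_steering": sorted(steering - outcome),
    }


report = audit_key_events(
    key_events=["scroll", "file_download", "page_view"],
    steering_events=["scroll", "file_download", "page_view"],
    outcome_events=["purchase"],  # assumption for this example
)
print(report)
```

For the report discussed in this article, both answers come out negative, and every steering event ends up on the list of activity events.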

Business impact

Let us take a simple example. The advertising budget is 5,000 euros per month. Behavioral events accumulate in large numbers, so reporting appears strong. Assume that 60 percent of the budget begins to be directed on the basis of an activity-describing signal. In that case, 3,000 euros per month is allocated to traffic that is evaluated on the basis of activity rather than business outcome.

This does not yet prove a realized euro-denominated loss. It illustrates how wrong control logic begins to shift money in the wrong direction. When the same mechanism repeats monthly, the impact grows. In addition to advertising spend, time and money are spent on analysis, corrections, and dismantling wrong conclusions.
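The arithmetic of the example, and how the exposure accumulates when the mechanism repeats, can be written out as a short Python sketch. The 12-month horizon is my assumption for illustration. The figures describe budget steered by an activity signal, not a realized loss.

```python
monthly_budget_eur = 5_000
# Share of the budget assumed to be steered by an activity-describing signal.
activity_steered_percent = 60

# Budget allocated each month on the basis of activity rather than outcome.
monthly_exposure_eur = monthly_budget_eur * activity_steered_percent // 100

# Cumulative exposure if the same mechanism repeats every month.
horizon_months = 12  # assumed horizon for illustration
cumulative_exposure_eur = [
    monthly_exposure_eur * month for month in range(1, horizon_months + 1)
]

print(f"Monthly exposure: {monthly_exposure_eur} EUR")
print(f"Exposure after {horizon_months} months: {cumulative_exposure_eur[-1]} EUR")
```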

The most serious harm arises when the organization and its systems begin to learn from the wrong signal. After that, the wrong logic begins to steer campaigns, reporting, and prioritization. This is why measurement architecture protects decision-making first and, only through that, the budget.

Conclusions

Measurement architecture determines whether data steers toward the business objective or away from it. If the structure produces the wrong signal, reports can appear credible and decisions still become distorted.

The problem in that case is not the amount of data. The problem is which signal is considered important and what systems begin to optimize. When a business objective does not become the correct optimization signal, reporting, budget, and automation begin to follow the wrong thing.

This is why the task of measurement architecture is not only to collect data. Its task is to ensure that the business objective translates into the correct control signal. Only then does measurement support decision-making rather than distort it.

To sustain trust, measurement architecture should rest on a single, consistent, canonical measurement policy that serves as the project’s “Source of Truth.” This keeps analyses, measurement logic, and optimization signals grounded in the same best practices, so that they guide the business reliably across versions and technical changes.

Further reading and official sources

About key events

Consent mode overview

Get started with Tag Manager

Modeling labels for conversion value prediction

The data layer

Accessed March 19, 2026